Source

Psychology Today

Summary

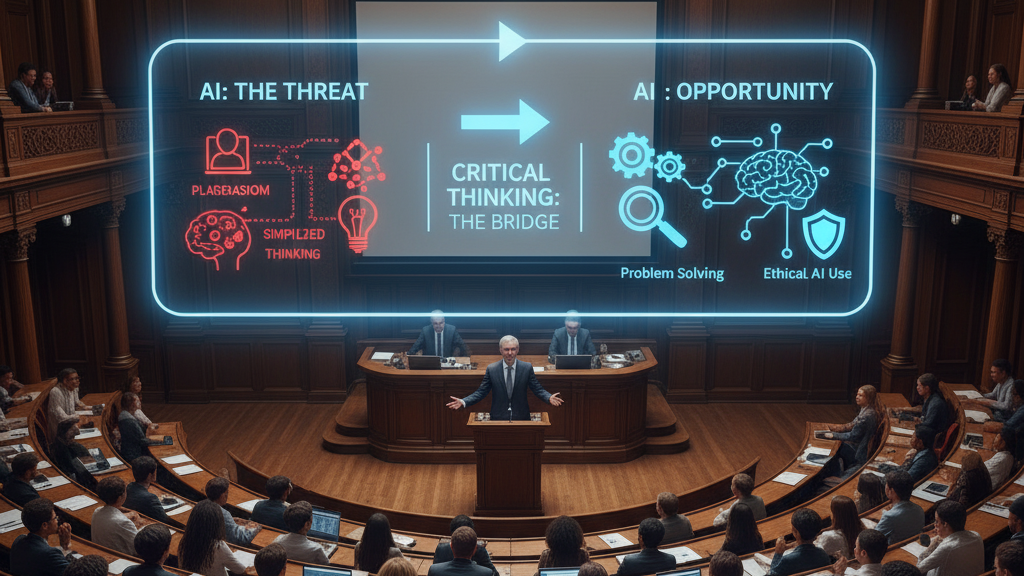

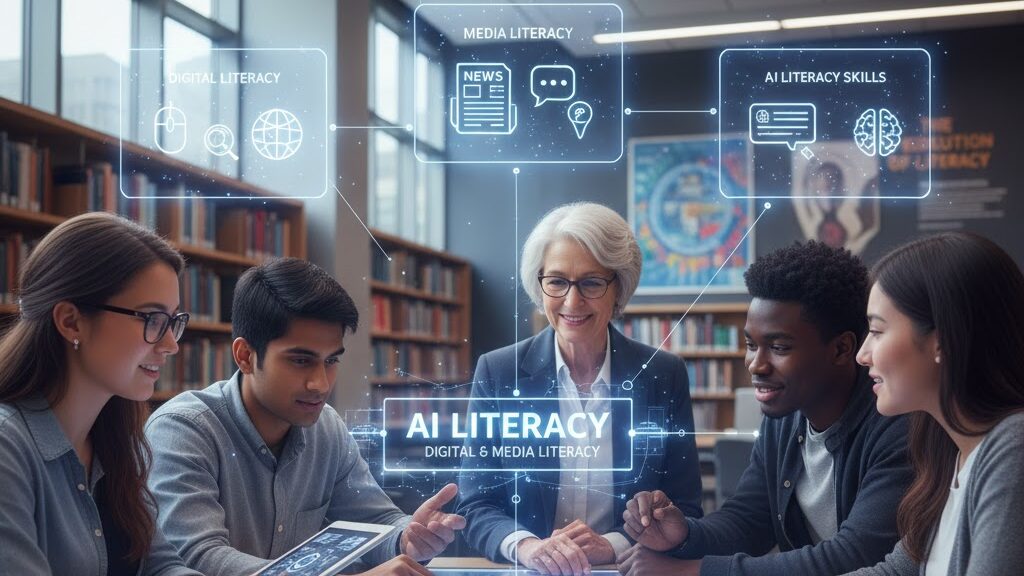

Diana E. Graber argues that “AI literacy” is not a new concept but a continuation of long-standing digital and media literacy principles. The sudden focus on AI education, triggered by the April 2025 executive order “Advancing Artificial Intelligence Education for American Youth,” highlights skills schools should have been teaching all along: critical thinking, ethical awareness, and responsible participation online. Graber outlines seven core areas where digital and media literacy underpin AI understanding, including misinformation, digital citizenship, privacy, and visual literacy. She warns that without these foundations, students face growing risks such as deepfake abuse, data exploitation, and online manipulation.

Key Points

- AI literacy builds directly on digital and media literacy foundations.

- An executive order has made AI education a US national priority.

- Core literacies—critical thinking, ethics, and responsibility—are vital for safe AI use.

- Key topics include misinformation, cyberbullying, privacy, and online safety.

- The article urges sustained digital education rather than reactionary AI hype.

Summary generated by ChatGPT 5