Source

BBC News

Summary

Academics at De Montfort University (DMU) in Leicester are receiving specialist training to identify when students misuse artificial intelligence in coursework. The initiative, led by Dr Abiodun Egbetokun and supported by the university’s new AI policy, seeks to balance ethical AI use with academic integrity. Lecturers are being taught to spot linguistic “markers” of AI generation, such as repetitive phrasing or Americanised language, though experts acknowledge that detection is becoming increasingly difficult. DMU encourages students to use AI tools to support critical thinking and research, but presenting AI-generated work as one’s own constitutes misconduct. Staff also highlight the flaws of AI detection software, which has produced false positives, and call for education over punishment. Students, meanwhile, recognise both the value and the ethical boundaries of AI in their studies and future professions.

Key Points

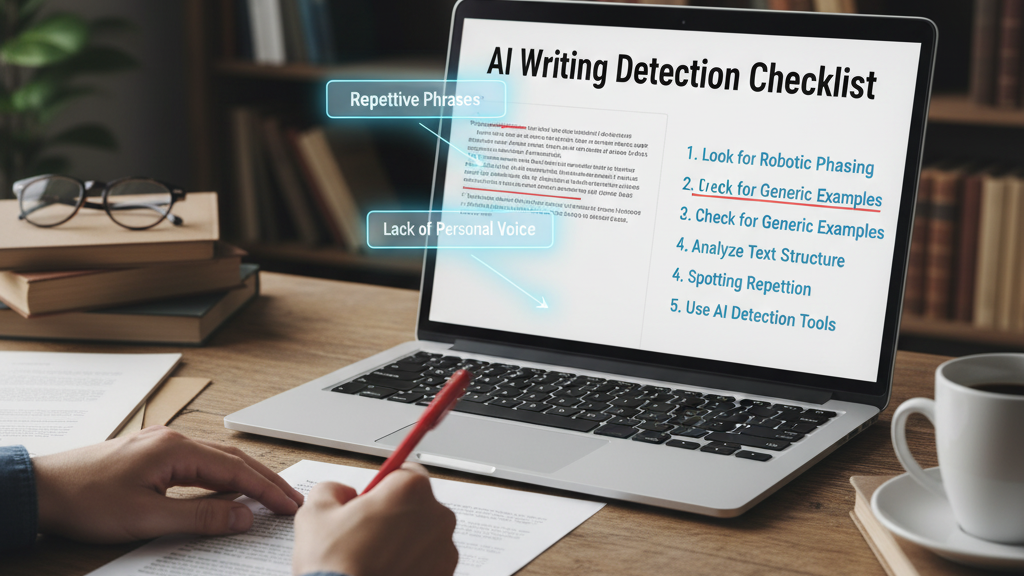

- DMU lecturers are being trained to recognise signs of AI misuse in student work.

- The university’s policy allows ethical AI use for learning support but bans misrepresentation.

- Detection relies on linguistic patterns rather than on detection software, which staff consider unreliable.

- Staff warn that false accusations can harm students as much as confirmed misconduct.

- Educators stress fostering AI literacy and integrity rather than “catching out” students.

- Students value AI for translation, study support, and clinical applications but accept clear ethical limits.

Keywords

URL

https://www.bbc.com/news/articles/c2kn3gn8vl9o

Summary generated by ChatGPT 5