Source

CNET

Summary

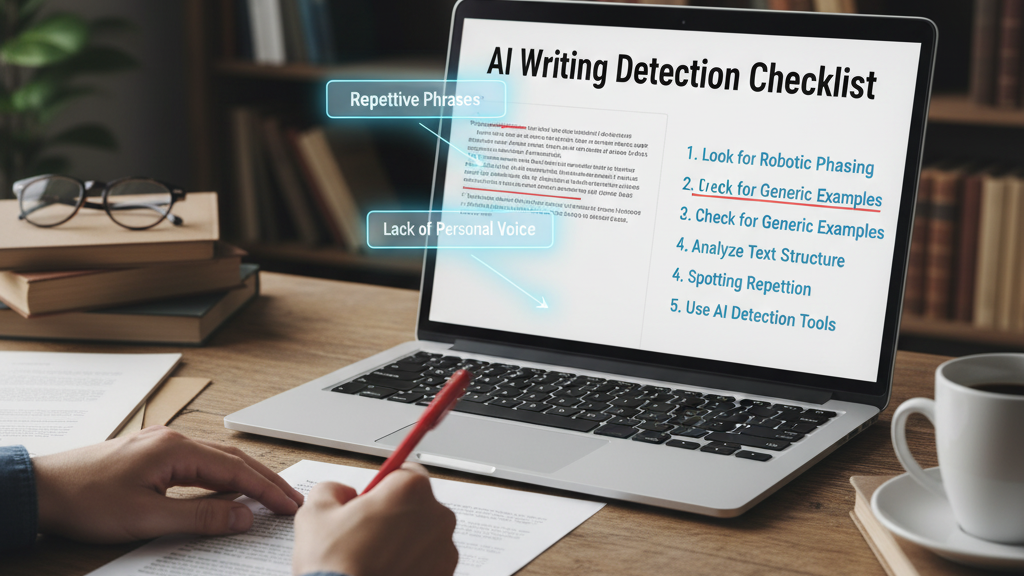

CNET offers a practical guide to spotting AI-generated writing. It highlights typical cues: leftover prompt wording that appears in the text, overly generic or ambiguous language, unnatural transitions, repetition, and a lack of depth or specificity. The article treats a piece that echoes the original assignment prompt too directly as a red flag. No single cue is definitive, but several tells together (a flat tone, formulaic structure, prompt residue) increase confidence that AI was involved. The aim is not to accuse writers but to make readers more critically alert to AI authorship.

Key Points

- AI-generated text often repeats wording from the assignment prompt verbatim.

- It tends to use generic, vague, or noncommittal phrasing more often than human writers do.

- Repetitive patterns, formulaic transitions, and a "flat" tone are common signals.

- Contextual depth, original insight, nuance, and emotional detail are often thin or missing.

- Look for a cluster of clues rather than relying on any single signal before concluding that a text was AI-written.

URL

https://www.cnet.com/tech/services-and-software/use-these-simple-tips-to-detect-ai-writing/

Summary generated by ChatGPT 5