by Tadhg Blommerde – Assistant Professor, Northumbria University

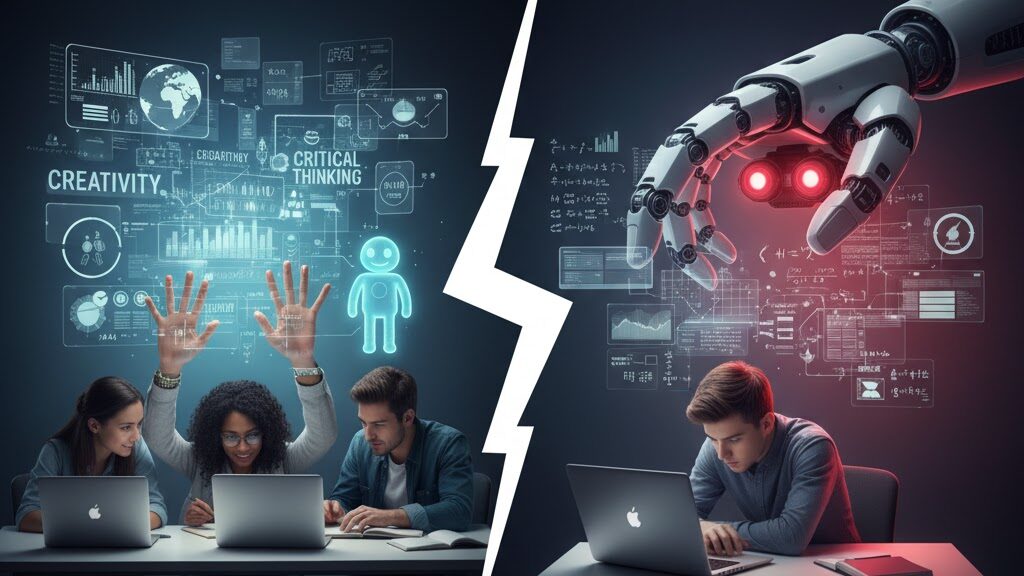

There is a scene I have witnessed many times in my classroom over the last couple of years. A question is posed, and before the silence has a chance to settle and spark a thought, a hand shoots up. The student confidently provides an answer, not from their own reasoning, but read directly from a glowing phone or laptop screen. Sometimes the answer is plainly wrong; other times it is plausible but subtly flawed, lacking the specific context of our course materials. Almost always, the student cannot satisfactorily explain the reasoning behind it. This is the modern classroom reality. Students arrive with generative AI already deeply embedded in their personal lives and academic processes, viewing it not as a tool, but as a magic machine, an infallible oracle. Their initial relationship with it is one of unquestioning trust.

The Illusion of the All-Knowing Machine

Attempting to ban this technology would be a futile gesture. Instead, the purpose of my teaching became to deliberately make students more critical and reflective users of it. At the start of my module, their overreliance is palpable. They view AI as an all-knowing friend, a collaborator that can replace the hard work of thinking and writing. In the early weeks, this manifests as a flurry of incorrect answers shouted out in class, the product of poorly constructed prompts fed (exclusively) into ChatGPT and complete faith in the responses it generates. The deficit is twofold: a lack of foundational knowledge on the topic, and a complete absence of critical engagement with the AI’s output.

Remedying this begins not with a single ‘aha!’ moment, but with a cumulative, twelve-week process of structured exploration. I introduce a prompt engineering and critical analysis framework that guides students through writing more effective prompts and critically engaging with AI output. We move beyond simple questions and answers. I task them with having AI produce complex academic work, such as literature reviews and research proposals, which they then systematically interrogate. Their task is to question everything. Does the output actually adhere to the instructions in the prompt? Can every claim and statement be verified with a credible, existing source? Are there hidden biases or a leading tone that misrepresents the topic or their own perspective?

Pulling Back the Curtain on AI

As they began this work, the curtain was pulled back on the ‘magic’ machine. Students quickly discovered the emperor had no clothes. They found AI-generated literature reviews cited non-existent sources or completely misrepresented the findings of real academic papers. They critiqued research proposals that suggested baffling methodologies, like using long-form interviews in a positivist study. This process forced them to rely on their own developing knowledge of module materials to spot the flaws. They also began to critique the writing itself, noting that the prose was often excessively long-winded, failed to make points succinctly, and felt bland. A common refrain was that it simply ‘didn’t sound like them’. They came to realise that AI, being sycophantic by design, could not provide the truly critical feedback necessary for their intellectual or personal growth.

This practical work was paired with broader conversations about the ethics of AI, from its significant environmental impact to the copyrighted material used in its training. Many students began to recognise their own over-dependence, reporting a loss of skills when starting assignments and a profound lack of satisfaction in their work when they felt they had overused this technology. Their use of the technology began to shift. Instead of a replacement for their own intellect, it became a device to enhance it. For many, this new-found scepticism extended beyond the classroom. Some students mentioned they were now more critical of content they encountered on social media, understanding how easily inaccurate or misleading information could be generated and spread. The module was fostering not just AI literacy, but a broader media literacy.

From Blind Trust to Critical Confidence

What this experience has taught me is that student overreliance on AI is often driven by a lack of confidence in their own abilities. By bringing the technology into the open and teaching them to expose its limitations, we do more than just create responsible users. We empower them to believe in their own knowledge and their own voice. They now see AI for what it is: not an oracle, but a tool with serious shortcomings. It has no common sense and cannot replace their thinking. In an educational landscape where AI is not going anywhere, our greatest task is not to fear it, but to use it as a powerful instrument for teaching the very skills it threatens to erode: critical inquiry, intellectual self-reliance, and academic integrity.

Tadhg Blommerde

Assistant Professor

Northumbria University

Tadhg is a lecturer (programme and module leader) and researcher who is proficient in quantitative and qualitative social science techniques and methods. His research to date has been published in the Journal of Business Research, The Service Industries Journal, and the European Journal of Business and Management Research. He currently holds two roles: Assistant Professor (Senior Lecturer) in Entrepreneurship at Northumbria University and MSc dissertation supervisor at Oxford Brookes University.

His interests include innovation management; the impact of new technologies on learning, teaching, and assessment in higher education; service development and design; business process modelling; statistics and structural equation modelling; and the practical application and dissemination of research.