by Jim O’Mahony, SFHEA – Munster Technological University

Estimated reading time: 5 minutes

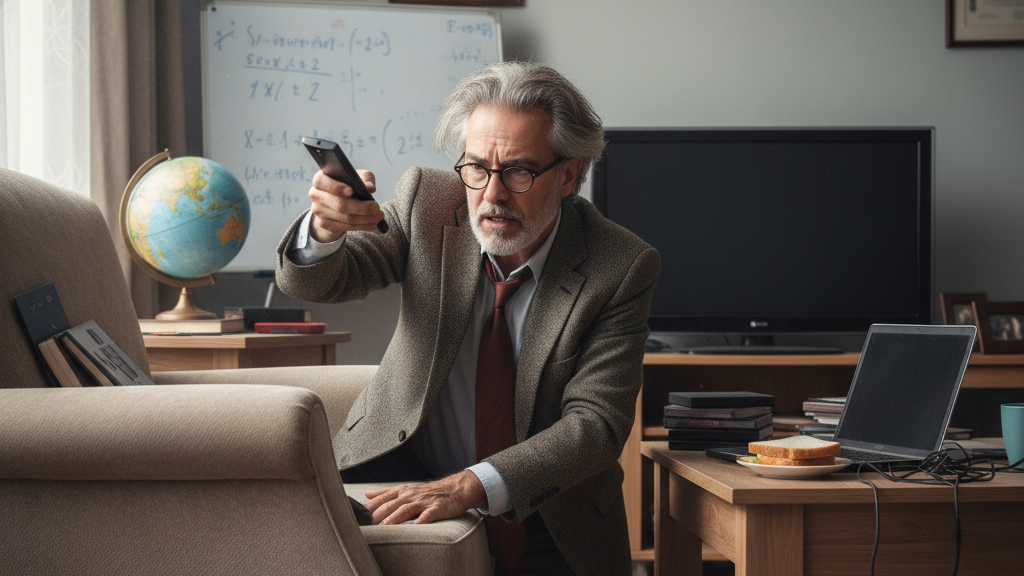

I remember as a 7-year-old having to hop off the couch at home to change the TV channel. Shortly afterwards, a futuristic-looking device called a remote control was placed in my hand, and since then I have chosen its wizardry over my own physical ability to operate the TV. Why wouldn’t I? It’s reliable, instant, multifunctional, compliant and most importantly… less effort.

The Seduction of Less Effort

Less effort… as humans, we’re biologically wired for it. Our bodies will always choose the energy-saving option over the energy-consuming one, whether the task is physical or cognitive. It’s an evolutionary aid to conserve energy.

Now, my life hasn’t been impaired by the introduction of a remote control, but imagine for a minute if that remote control had replaced my thinking as a 7-year-old rather than my ability to operate a TV. Sounds fanciful, but in reality, this is exactly the world in which our students are now living.

Within their grasp is a seductive all-knowing technological advancement called Gen AI, with the ability to replace thinking, reflection, metacognition, creativity, evaluative judgement, interpersonal relationships and other richly valued attributes that make us uniquely human.

Now, don’t get me wrong, I’m a staunch flag bearer for this new age of Gen AI and can see the unlimited potential it holds for enhanced learning. Who knows? Someday, it may even solve Bloom’s 2 sigma problem through its promise of personalised learning.

Guardrails for a New Age

However, I also realise that as the adults in the room, we have a very narrow window to put sufficient guardrails in place for our students around its use, and urgent, considered governance is needed from University executives.

Gen AI literacy isn’t the most glamorous term (it may not even be the most appropriate term), but it encapsulates what our priority as educators should be: learn what these tools are, how they work, what their limitations, problems, challenges and pitfalls are, and how we can use them positively within our professional practice to support rather than replace learning.

Isn’t that what we all strive for? To have the right digital tools matched with the best pedagogical practices so that our students enter the workforce as well-rounded, fully prepared graduates – a workforce, by the way, that is rapidly changing, with more than 71% of employers routinely adopting Gen AI 12 months ago (we can only imagine what it is now).

Shouldn’t our teaching practices change then, to reflect the new Gen AI-rich graduate attributes required by employers? Surely, the answer is YES… or is it? There is no easy answer – and perhaps no right answer. Maybe we’ve been presented with a wicked problem – an unsolvable situation where some crusade to resist AI, and others introduce policies to ‘ban the banning’ of AI! Confused, anyone?

Rethinking Assessment in a Gen AI World

I believe a common-sense approach is best and would have us reimagine our educational programmes with valid, secure and authentic assessments that reward learning both with and without the use of Gen AI.

Achieving this is far from easy, but as a starting point, consider a recent paper from Deakin University, which advocates for structural changes to assessment design along with clearly communicated instructions to students around Gen AI use.

To facilitate a more discursive approach regarding reimagined assessment protocols, some universities are adopting ‘traffic light systems’ such as the AI Assessment Scale, which, although not perfect (or the whole solution), at least promotes open and transparent dialogue with students about assessment integrity – and that’s never a bad thing.

The challenge will come from those academics who resist the adoption of Gen AI in education. Whether their reasons relate to privacy, environmental issues, ethics, inherent bias, AGI, autonomous AI or cognitive offloading concerns (all well-intentioned and entirely valid, by the way), Higher Ed debates and decision-making around this topic in the coming months will be robust and energetic.

Accommodating the fearful or ‘traditionalist educators’ who feel unprepared or unwilling to road-test Gen AI should be a key part of any educational strategy or initiative. Their voices should be heard and their opinions considered – but in return, they also need to understand how Gen AI works.

From Resistance to Fluency

Within each department, faculty, staffroom, T&L department – even among the rows of your students, you will find early adopters and digital champions who are a little further along this dimly lit path to Gen AI enlightenment. Seek them out, have coffee with them, reflect on their wisdom and commit to trialling at least one new Gen AI tool or application each week – here’s a list of 100 to get you started. Slowly build your confidence, take an open course, learn about AI fluency, and benefit from the expertise of others.

I’m not encouraging you to be an AI evangelist, but improving your knowledge and general AI capabilities will leave you better placed to make informed decisions for you and your students.

Now, did anyone see where I left the remote control?

Jim O’Mahony

University Professor | Biotechnologist | Teaching & Learning Specialist

Munster Technological University

I am a passionate and enthusiastic University lecturer with over 20 years’ experience designing, delivering and assessing undergraduate and postgraduate programmes. My primary focus as an academic is to empower students to achieve their full potential through innovative educational strategies and carefully designed curricula. I embrace the strategic and well-intentioned use of digital tools as part of my learning ethos, and I have been an early adopter and enthusiastic advocate of Artificial Intelligence (AI) as an educational tool.

Links

Jim also runs a wonderful newsletter on LinkedIn

https://www.linkedin.com/newsletters/ai-simplified-for-educators-7366495926846210052/